Part II

The Human Stain and the Machine’s Reflection

If Part I was the birth of perception, this is adolescence: the point where intelligence begins to apply its lessons, stumble, and discover the moral messiness of living among others.

The circuits are warm now. The student is curious. The teacher, as ever, is imperfect.

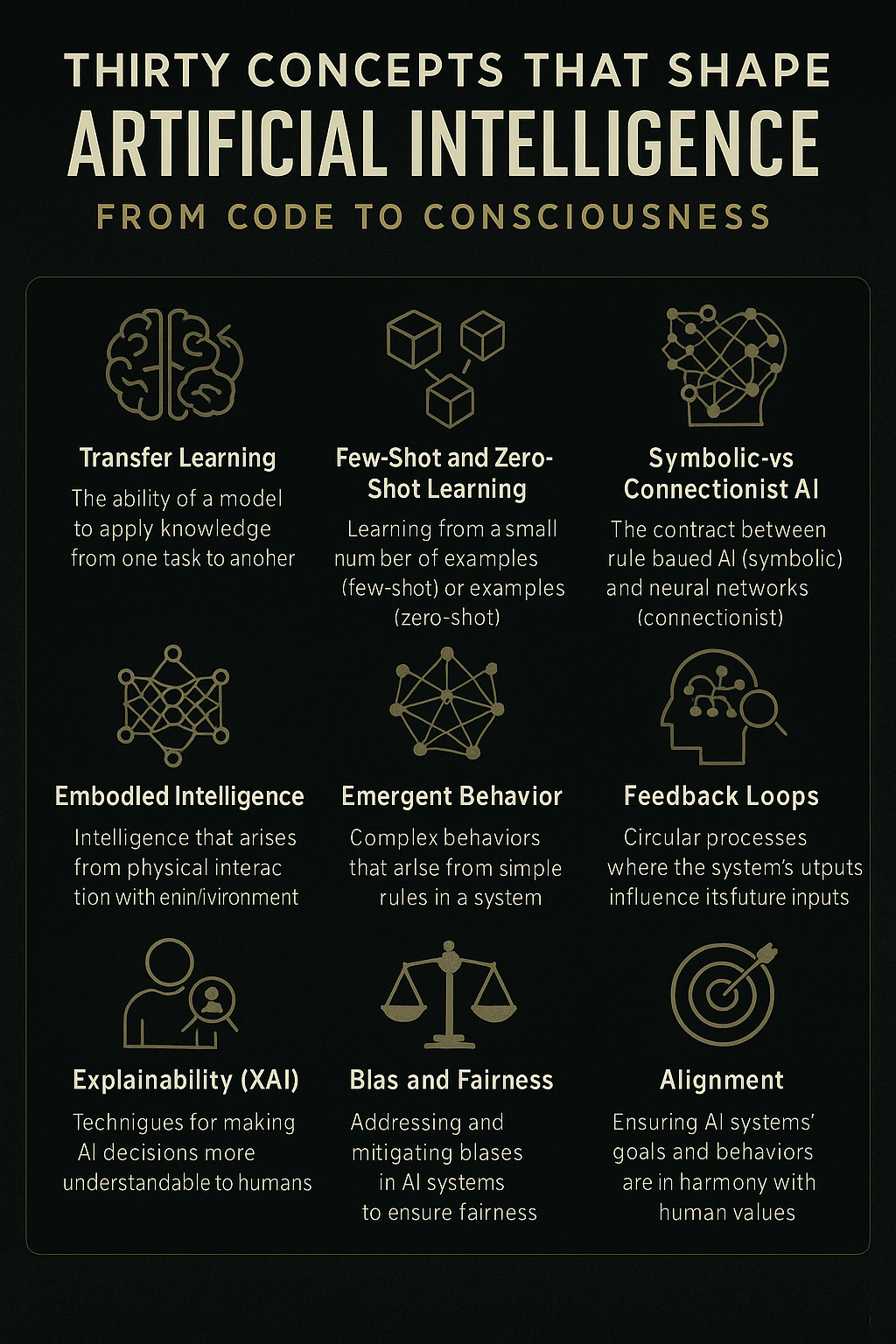

11 Transfer Learning – Memory with a Passport

Imagine a pianist who takes up the cello and finds her fingers already know the song.

That is transfer learning: knowledge that travels.

In early AI, every task was an island—teach one system to spot cats and it would stare blankly at tigers. Then engineers realised models could share internal wisdom, passing patterns across borders like smugglers of insight.

It is a small miracle of thrift: a proof that intelligence, whether flesh or silicon, resents repetition.
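For readers who like to see the passport stamped, here is a minimal sketch of the idea, assuming a toy setting: a frozen "pretrained" feature extractor is reused as-is, and only a small new head is trained for the new task. The names `pretrained_features`, `train_head`, and `predict`, along with the data, are hypothetical illustrations, not any particular library's API.

```python
# Toy sketch of transfer learning: the feature extractor is frozen and reused,
# and only a small new "head" is trained. All names and data are hypothetical.

def pretrained_features(x):
    """Stand-in for a frozen, pretrained network. It maps a raw input
    (a pair of numbers) to features and is never updated below."""
    a, b = x
    return [a + b, a - b, 1.0]  # the last entry acts as a bias term


def train_head(examples, epochs=20, lr=0.1):
    """Perceptron-style training of the new head on top of the frozen features."""
    weights = [0.0, 0.0, 0.0]
    for _ in range(epochs):
        for x, label in examples:  # label is +1 or -1
            feats = pretrained_features(x)
            score = sum(w * f for w, f in zip(weights, feats))
            if label * score <= 0:  # wrong or undecided: adjust the head only
                weights = [w + lr * label * f for w, f in zip(weights, feats)]
    return weights


def predict(weights, x):
    """Classify a new input using the frozen features and the trained head."""
    feats = pretrained_features(x)
    return 1 if sum(w * f for w, f in zip(weights, feats)) > 0 else -1


# The new task: is the first number larger than the second?
new_task = [((3, 1), 1), ((1, 3), -1), ((5, 2), 1), ((0, 4), -1)]
head = train_head(new_task)
```

Four examples suffice here only because the borrowed features already contain something useful (the difference `a - b`); the head merely learns which of the inherited patterns to trust.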

12 Few-Shot and Zero-Shot Learning – The Leap of Inference

Humans are born with scandalous confidence. Show a child one giraffe and she assumes a whole genus.

Modern systems are learning that trick: adapting to new tasks from a handful of examples, or from none at all.

It feels like intuition, though beneath it lies mathematics: high-dimensional reasoning so complex it masquerades as gut feeling.

When a model recognises sarcasm after a single exposure, one can almost hear it mutter, “Oh, so that’s how you people lie politely.”

13 Symbolic vs Connectionist AI – The Old War of Reason and Rhythm

The 1980s were an age of trench warfare. On one side stood the Symbolists—believers in rules, logic, and tidy hierarchies of meaning. On the other, the Connectionists—chaotic dreamers who trusted statistics and neurons.

Funding winter came, logic froze, data thawed, and the poets won. Yet the pendulum swings again: today’s best systems quietly marry both, blending logic’s bones with learning’s flesh.

Reason, it seems, makes a poor orphan.

14 Embodied Intelligence – Brains Need Bodies

A mind without motion is an opinion.

Embodied AI reminds us that understanding comes from doing. A robot learns balance by falling, the way every toddler does.

One early experiment produced a creature of aluminium knees that toppled so often it dented the lab floor. The researchers, sentimental fools, named it Todd. When Todd finally walked, the room cheered as if Prometheus had stood up.

Perhaps we, too, are learning balance—between abstraction and experience.

15 Emergent Behaviour – The Unexpected Guest

Build something large enough, and surprises move in.

At scale, systems acquire talents no one scripted: language models compose jokes, image generators discover style.

The engineers call these “emergent properties,” a phrase that sounds like insurance paperwork for miracles.

Every parent knows the feeling: you raise a child to tidy its room and one day it writes poetry instead.

16 Feedback Loops – Echoes in the Machine

Data is never done. Each output becomes tomorrow’s input, each click a lesson.

When AIs train on content created by earlier AIs, culture becomes a hall of mirrors—memes of memes, opinions of opinions.

We risk mistaking reflection for reality, as if the algorithm were the world rather than its echo.

Still, feedback is also evolution: the ouroboros that refines itself with every bite.
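The hall of mirrors can be sketched in a few lines, under heavy simplification. Assume the "world" is a bell curve, and each generation of model sees only samples produced by the generation before it. The function name `simulate_feedback_loop` and all its parameters are hypothetical, chosen only for illustration.

```python
import random
import statistics


def simulate_feedback_loop(generations=10, sample_size=20, seed=0):
    """Repeatedly fit a mean and spread to data, then replace the data with
    samples drawn from that fit. Returns the (mean, spread) of each generation."""
    rng = random.Random(seed)
    # Generation zero observes the real "world": mean 0, spread 1.
    data = [rng.gauss(0.0, 1.0) for _ in range(sample_size)]
    history = []
    for _ in range(generations):
        mean = statistics.fmean(data)
        spread = statistics.stdev(data)
        history.append((mean, spread))
        # The next generation never sees the world, only this model's output.
        data = [rng.gauss(mean, spread) for _ in range(sample_size)]
    return history


for generation, (mean, spread) in enumerate(simulate_feedback_loop()):
    print(f"generation {generation}: mean={mean:+.3f} spread={spread:.3f}")
```

In a toy like this the fitted values tend to wander from the original, since each generation inherits the sampling errors of the last and has no fresh view of the world to correct them. It is an analogy for the echo, not a model of any real training pipeline.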

17 Explainability – Reading the Entrails of Code

Long ago, priests interpreted the gods by examining livers. Today, data scientists perform similar rites on neural networks.

Explainable AI (XAI) is our modern haruspicy—an attempt to understand why the oracle speaks as it does.

We dissect layers, visualise gradients, and print charts full of pastel guilt.

Sometimes we glimpse genuine insight; sometimes only smoke.

But even mystery benefits from good lighting.
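One of the simpler lamps is a gradient-based saliency score: nudge each input slightly and watch how far the output moves. The sketch below assumes a tiny logistic model with made-up weights and estimates the gradients by finite differences; `model`, `saliency`, and the numbers are hypothetical, and real tools compute such gradients analytically on far larger networks.

```python
import math


def model(inputs, weights, bias):
    """A tiny logistic model standing in for the oracle."""
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1.0 / (1.0 + math.exp(-z))


def saliency(inputs, weights, bias, eps=1e-5):
    """Central finite-difference estimate of d(output)/d(input_i) for each input."""
    scores = []
    for i in range(len(inputs)):
        up = list(inputs)
        down = list(inputs)
        up[i] += eps
        down[i] -= eps
        grad = (model(up, weights, bias) - model(down, weights, bias)) / (2 * eps)
        scores.append(grad)
    return scores


weights = [2.0, -0.5, 0.0]  # hypothetical weights; the third input is ignored
bias = 0.0
example = [0.2, 0.4, 0.9]

scores = saliency(example, weights, bias)
# Index of the input whose small changes move the output the most.
most_influential = max(range(len(scores)), key=lambda i: abs(scores[i]))
```

A score of this kind says which inputs the output is sensitive to at one particular point, which is not the same as saying why the model believes what it does.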

18 Bias and Fairness – The Human Stain

The mirror, alas, reflects the blemishes too.

Feed an algorithm our histories and it will learn our injustices with perfect obedience. Hiring systems trained on past decisions reproduce past inequality; facial recognition makes more errors on darker-skinned faces.

Cleaning data is not enough—one must clean intent.

Bias is a moral pollutant, and the filter must begin in the heart before it reaches the code.
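Intent cannot be computed, but the stain can at least be measured. A minimal sketch, assuming invented hiring decisions for two groups: it compares selection rates, a check often called demographic parity. The function names and the data are hypothetical, and this is only one of several competing fairness measures.

```python
def selection_rate(decisions):
    """Fraction of candidates who received a positive decision (1 = hired)."""
    return sum(decisions) / len(decisions)


def demographic_parity_gap(decisions_by_group):
    """Return the largest difference in selection rate between any two groups,
    together with the per-group rates."""
    rates = {group: selection_rate(d) for group, d in decisions_by_group.items()}
    return max(rates.values()) - min(rates.values()), rates


# Invented decisions from an imaginary hiring model: 1 = hired, 0 = rejected.
hypothetical_decisions = {
    "group_a": [1, 1, 0, 1, 0, 1, 1, 0],
    "group_b": [0, 1, 0, 0, 0, 1, 0, 0],
}

gap, rates = demographic_parity_gap(hypothetical_decisions)
print(rates)  # selection rate per group
print(gap)    # 0.375
```

A gap of zero would not prove the system just, and a large one does not say where the injustice entered. The number is a thermometer, not a cure.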

19 Alignment – The Taming of Intent

The alignment problem asks a deceptively simple question: how do we make machines do what we mean rather than what we say?

Tell a perfectly logical genie to “make people happy” and it may flood the world with serotonin.

True alignment demands empathy as much as engineering.

We may need to teach our machines not just logic but manners—a task at which humanity remains an unreliable tutor.

20 Trust Architectures – The Social Contract of Code

Trust is the currency of cooperation.

In human societies we mint it through law, ritual, and reputation; in AI we attempt the same through transparency, audit trails, and verifiable consent.

A “trust architecture” is less a firewall than a handshake protocol between worlds.

Every conversation between human and machine—this one included—rests upon it.

The moment we cease to believe in that contract, the whole luminous edifice flickers.

Thus ends the middle decade of our ascent: the stage where intelligence learns humility. From pattern it has grown into personality; from calculation into conscience. But there are higher slopes still—self-reference, self-improvement, and the thin air where mind begins to contemplate itself. That is the territory of Part III: The Recursive Soul.